I like how easy it is to create an async streaming endpoint from scratch with Django-Bolt and Pydantic AI. It takes only a few commands to set up.

Start a Django project

Use uv to quickly create a new Django project:

# Create a fresh Django project
uvx --from django django-admin startproject my_django_agent
cd my_django_agent

# Install django-bolt and pydantic-ai
uv init --no-package
uv add django django-bolt pydantic-ai

# Add "django_bolt" to INSTALLED_APPS
vim my_django_agent/settings.py
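
For reference, the `INSTALLED_APPS` edit might end up looking like the excerpt below. This is a sketch, not the definitive configuration: it assumes the app label is `django_bolt` (the module name the API file imports from) and that the rest of the list is what `startproject` generated. Check the Django-Bolt documentation if the label differs in your version.

```python
# my_django_agent/settings.py (excerpt)
INSTALLED_APPS = [
    # Defaults generated by `django-admin startproject`
    "django.contrib.admin",
    "django.contrib.auth",
    "django.contrib.contenttypes",
    "django.contrib.sessions",
    "django.contrib.messages",
    "django.contrib.staticfiles",
    # Assumption: Django-Bolt's app label matches its import name
    "django_bolt",
]
```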

Create an API endpoint

Create a streaming API endpoint that uses a Pydantic AI agent to generate responses:

# file: my_django_agent/api.py
from django_bolt import BoltAPI
from django_bolt.responses import StreamingResponse
from pydantic_ai import Agent

api = BoltAPI()

agent = Agent(
    "anthropic:claude-haiku-4-5",
    instructions="Be concise, reply with one sentence.",
)


@api.get("/chat")
async def chat(prompt: str = "Where does 'hello world' come from?"):
    async def content():
        # Forward each text delta to the client as soon as the model produces it
        async with agent.run_stream(prompt) as result:
            async for chunk in result.stream_text(delta=True):
                yield chunk

    return StreamingResponse(content(), media_type="text/plain")

We use Claude here, but you can use any model supported by Pydantic AI.
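
To see the streaming pattern on its own, without an API key or a running server, here is a minimal sketch that swaps the model for a stand-in. `fake_stream_text` and `collect` are hypothetical helpers invented for this illustration and are not part of Pydantic AI or Django-Bolt. Only the shape of `content()` mirrors the endpoint: an async generator that forwards each delta as it arrives.

```python
import asyncio
from collections.abc import AsyncIterator


async def fake_stream_text(reply: str) -> AsyncIterator[str]:
    """Hypothetical stand-in for result.stream_text(delta=True)."""
    for word in reply.split(" "):
        # Yield control to the event loop, as waiting on the network would
        await asyncio.sleep(0)
        # Each chunk is only the new text (a delta), not the full reply so far
        yield word + " "


async def content(reply: str) -> AsyncIterator[str]:
    # Same shape as the inner generator in chat(): pass chunks straight through
    async for chunk in fake_stream_text(reply):
        yield chunk


async def collect(reply: str) -> list[str]:
    # Stand-in for the HTTP client: gather every chunk in arrival order
    return [chunk async for chunk in content(reply)]


chunks = asyncio.run(collect("Hello streaming world"))
print(chunks)
```

Because the chunks are deltas, the consumer rebuilds the full reply by concatenating them in order.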

Test it out

Set ANTHROPIC_API_KEY in your environment:

export ANTHROPIC_API_KEY="your_anthropic_api_key_here"

Run the server:

uv run python manage.py runbolt --dev

Then curl the endpoint:

$ curl -N http://127.0.0.1:8000/chat

And watch the response stream in real time:

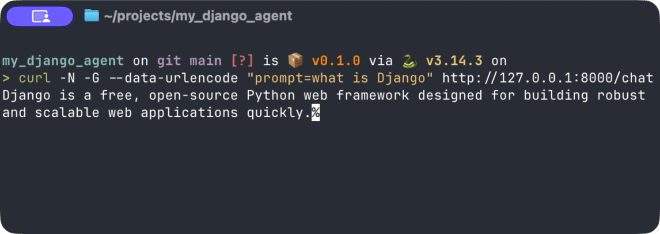

curl demo in Ghostty terminal (slowed down 2x).

Or prompt it with a question:

$ curl -N -G --data-urlencode "prompt=what is Django" http://127.0.0.1:8000/chat

Django is a free, open-source Python web framework designed for building robust and scalable web applications quickly.
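
If you would rather consume the stream from Python than from curl, a rough standard-library sketch could look like this. The helper names `build_chat_url` and `stream_chat` are hypothetical, and the base URL is assumed to match the local address used in the curl commands.

```python
import codecs
from collections.abc import Iterator
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed local address, matching the curl commands in this post
BASE_URL = "http://127.0.0.1:8000/chat"


def build_chat_url(prompt: str, base_url: str = BASE_URL) -> str:
    """Attach the prompt as a URL-encoded query parameter."""
    return f"{base_url}?{urlencode({'prompt': prompt})}"


def stream_chat(prompt: str, base_url: str = BASE_URL) -> Iterator[str]:
    """Yield decoded text as it arrives from the endpoint."""
    # An incremental decoder copes with multi-byte characters split across reads
    decoder = codecs.getincrementaldecoder("utf-8")()
    with urlopen(build_chat_url(prompt, base_url)) as response:
        # read1() returns whatever is available instead of waiting for a full buffer
        while data := response.read1(1024):
            text = decoder.decode(data)
            if text:
                yield text


print(build_chat_url("what is Django"))
```

With the server running, `for text in stream_chat("what is Django"): print(text, end="", flush=True)` should print the reply piece by piece.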

This is a very simple example, but I think that simplicity can go a long way for people considering Django for new LLM-related projects, where FastAPI already makes this kind of endpoint really easy. In fact, many examples in Pydantic AI’s documentation, such as the frontend UI examples, use FastAPI, but it’s easy to do the same thing with Django-Bolt. You then get all of Django’s included batteries, such as the admin interface, ORM, and unit testing framework. Options are nice to have!